The secret is out: Kubernetes is the industry standard for container orchestration, and its popularity is only increasing. According to a 2022 survey conducted by the Cloud Native Computing Foundation, 96% of responding IT professionals are now either using or evaluating Kubernetes (K8s).

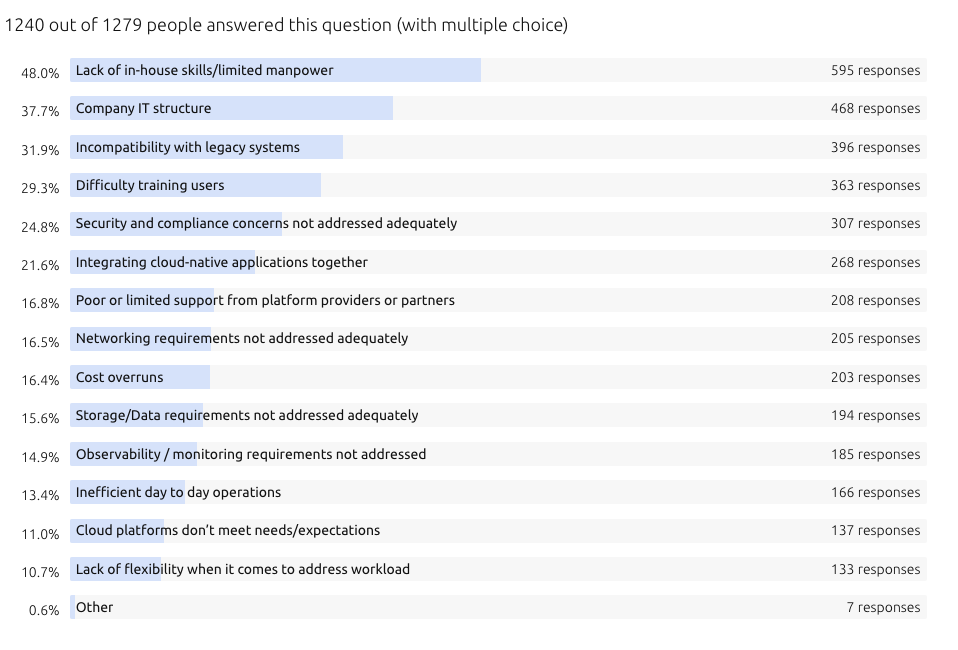

The benefits of Kubernetes are vast: elasticity and agility, resource optimization, reduced service costs, faster time-to-market, and cloud portability are all widely cited reasons for adopting it. However, Kubernetes is complex, and its many dynamic components (e.g., nodes, pods, containers, kube-proxy, the scheduler) make it a difficult system to adopt. As a result, organizations report that a lack of in-house skills is the biggest challenge when migrating to or using K8s.

Source: Canonical – Kubernetes and Cloud Native Operations Report 2022

Closing the Kubernetes Skills Gap

To address the staffing challenges that Kubernetes presents, many organizations turn to managed Kubernetes services, such as Amazon Elastic Kubernetes Service (EKS) or Azure Kubernetes Service (AKS). Because the cloud provider operates the Kubernetes control plane and supplies cloud-native automation and governance, EKS and AKS reduce the need for in-house talent, helping application developers go to market faster and work more productively.

That said, EKS and AKS present their own challenges. If an organization has critical compliance mandates or security requirements, or runs a hybrid cloud model, it may need supplemental expertise and independent software vendor (ISV) tooling.

Working with a managed service provider (MSP) is a popular choice for closing the Kubernetes skills gap. An MSP can wholly manage your Kubernetes infrastructure or work as an extension of your existing IT team, providing valuable expertise that helps you achieve your business goals.


Simplify Deployment Through Automation

A key benefit of Kubernetes is that application deployments and updates can be automated. Because components don't have to be shut down for each deployment, your CI/CD pipeline can be truly continuous in a Kubernetes environment. Applications and updates can move between testing and production environments with the push of a button, and rolling updates, which replace pods incrementally, can be performed without downtime.
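To make "without downtime" more concrete: a Deployment's rolling update is bounded by two settings, `maxSurge` and `maxUnavailable`, which both default to 25%. Per the Kubernetes documentation, a percentage `maxSurge` rounds up and a percentage `maxUnavailable` rounds down. The Python below is a simplified model of that arithmetic, not the Deployment controller's code; the function names are our own, for illustration only.

```python
def resolve_int_or_percent(value, replicas, round_up):
    """Convert an int-or-percent setting (e.g. 2 or "25%") into a pod count."""
    if isinstance(value, int):
        return value
    if not value.endswith("%"):
        raise ValueError(f"expected an int or a percentage like '25%', got {value!r}")
    scaled = replicas * int(value[:-1])
    # Integer ceiling/floor division avoids floating-point rounding surprises.
    return -(-scaled // 100) if round_up else scaled // 100


def rolling_update_bounds(replicas, max_surge="25%", max_unavailable="25%"):
    """Return (max total pods during the rollout, min available pods during the rollout)."""
    surge = resolve_int_or_percent(max_surge, replicas, round_up=True)
    unavailable = resolve_int_or_percent(max_unavailable, replicas, round_up=False)
    # If both settings resolve to zero the rollout could never progress,
    # so Kubernetes falls back to allowing one unavailable pod.
    if surge == 0 and unavailable == 0:
        unavailable = 1
    return replicas + surge, replicas - unavailable


print(rolling_update_bounds(10))              # (13, 8)
print(rolling_update_bounds(3, max_surge=0))  # (3, 2)
```

With the defaults, a 10-replica Deployment may briefly run up to 13 pods but never drops below 8 available ones, which is how traffic keeps flowing while old pods are replaced.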

Whether your Kubernetes nodes run in the public cloud or on-premises, Kubernetes automatically schedules container deployments across multiple compute nodes. This automation significantly reduces time-to-market and improves developer efficiency.
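Under the hood, the Kubernetes scheduler places each pod in two phases: it filters out nodes that can't fit the pod, then scores the remaining candidates. The sketch below is a heavily simplified, hypothetical illustration loosely modeled on a "least allocated" scoring strategy that looks only at CPU and memory requests; the real kube-scheduler considers many more factors, and the `Node`, `Pod`, and `schedule` names here are our own.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    cpu_allocatable_m: int    # CPU the node can offer to pods, in millicores
    mem_allocatable_mi: int   # memory the node can offer to pods, in MiB
    cpu_requested_m: int = 0  # CPU already requested by pods on this node
    mem_requested_mi: int = 0


@dataclass
class Pod:
    cpu_request_m: int
    mem_request_mi: int


def fits(node: Node, pod: Pod) -> bool:
    """Filter phase: does the node have room for the pod's requests?"""
    return (node.cpu_requested_m + pod.cpu_request_m <= node.cpu_allocatable_m
            and node.mem_requested_mi + pod.mem_request_mi <= node.mem_allocatable_mi)


def least_allocated_score(node: Node, pod: Pod) -> float:
    """Score phase: prefer the node with the most free capacity after placement (0-100)."""
    cpu_free = 1 - (node.cpu_requested_m + pod.cpu_request_m) / node.cpu_allocatable_m
    mem_free = 1 - (node.mem_requested_mi + pod.mem_request_mi) / node.mem_allocatable_mi
    return 100 * (cpu_free + mem_free) / 2


def schedule(pod: Pod, nodes: List[Node]) -> Optional[str]:
    """Pick a node and record the pod's requests there; None means no node fits."""
    candidates = [n for n in nodes if fits(n, pod)]
    if not candidates:
        return None  # a real cluster would leave the pod Pending
    best = max(candidates, key=lambda n: least_allocated_score(n, pod))
    best.cpu_requested_m += pod.cpu_request_m
    best.mem_requested_mi += pod.mem_request_mi
    return best.name


nodes = [Node("node-a", 4000, 8192, cpu_requested_m=3000, mem_requested_mi=2048),
         Node("node-b", 4000, 8192, cpu_requested_m=1000, mem_requested_mi=4096)]
print(schedule(Pod(500, 512), nodes))   # node-b
print(schedule(Pod(3500, 512), nodes))  # None
```

Spreading pods toward the least-loaded node is one reasonable default; other strategies deliberately pack nodes tightly so that idle nodes can be removed.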

Advanced Monitoring

Kubernetes is an extremely flexible system, allowing integrations with multiple monitoring tools. Understanding your CPU and RAM utilization, network latency, and disk I/O is critical to maintaining your K8s environment. Depending on your needs and budget, your platform can be configured to provide granular insight into the health of your clusters.

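The metrics collected by your monitoring tools can drive autoscaling of your Kubernetes resources (for example, the Horizontal Pod Autoscaler adds or removes pod replicas as observed CPU utilization rises or falls), keeping your environment rightsized for both cost efficiency and high availability.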
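Kubernetes documents the Horizontal Pod Autoscaler's core calculation as desiredReplicas = ceil[currentReplicas * (currentMetricValue / desiredMetricValue)], with no scaling when that ratio is within a tolerance of 1.0 (0.1 by default). A minimal Python rendering, assuming a single metric and ignoring the HPA's stabilization windows and its handling of unready pods; the `max_replicas=10` default is an arbitrary placeholder:

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA calculation for one metric, e.g. average CPU utilization in %."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: skip scaling
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the autoscaler's configured replica range.
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target -> scale out to 6.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```

The tolerance band is what keeps small metric fluctuations from causing constant scale-up/scale-down churn.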

Keeping Clusters Secure

While cloud-native tooling offers security features that protect your Kubernetes environment, ISV tools and admission controllers add an extra layer of protection for your K8s clusters. Tools from popular security ISVs (such as Alert Logic and Trend Micro) scan and collect information from your containers to detect threats and generate alerts for anything deemed suspicious or malicious. Security rules can be customized to support continuous compliance and to flag container activity that violates them.

An admission controller such as Kyverno can enforce security policies at the API level: if a request to the Kubernetes API server does not meet your security requirements, the request is rejected. Kyverno policies can validate, mutate, and generate Kubernetes resources, and can also verify OCI image signatures to help secure the software supply chain. Software supply chain attacks have increased significantly in recent years, so securing your supply chain in Kubernetes is more critical than ever.

Cost Optimization

Cutting monthly costs is a top priority for nearly every cloud customer. The wide adoption of Kubernetes reflects that concern: when configured correctly, Kubernetes infrastructure is inherently cost-optimized. A few ways that K8s keeps costs in check include:

- Lower operating costs through automation

- Expanded cloud backup options

- Advanced monitoring for predicting usage costs

- Efficient resource management

- Faster time-to-market

On a technical level, Kubernetes resources can be labeled (and the underlying cloud resources tagged) so that costs can be attributed at a granular level, for example per team or per application. A FinOps solution can help you understand your cluster resource utilization, evaluate whether your clusters are configured to support your workloads, and identify areas of waste or underutilization.
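As a rough illustration of label-based cost allocation, the sketch below sums the cost of each pod's CPU and memory requests by a chosen label and flags pods that use far less CPU than they request. The prices, the `team` label, the pod records, and the 50% threshold are all hypothetical; real FinOps tools pull actual node pricing and usage metrics from your cluster and cloud provider.

```python
from collections import defaultdict

# Hypothetical on-demand prices; substitute your provider's actual rates.
CPU_PRICE_PER_CORE_HOUR = 0.04
MEM_PRICE_PER_GIB_HOUR = 0.005


def cost_by_label(pods, label_key, hours):
    """Attribute request-based cost to each value of a pod label."""
    totals = defaultdict(float)
    for pod in pods:
        owner = pod["labels"].get(label_key, "(unlabeled)")
        cores = pod["cpu_request_m"] / 1000
        gib = pod["mem_request_mi"] / 1024
        totals[owner] += (cores * CPU_PRICE_PER_CORE_HOUR + gib * MEM_PRICE_PER_GIB_HOUR) * hours
    return dict(totals)


def underutilized(pods, threshold=0.5):
    """Names of pods whose observed CPU use is below `threshold` of their request."""
    return [p["name"] for p in pods if p["cpu_usage_m"] / p["cpu_request_m"] < threshold]


pods = [
    {"name": "checkout-1", "labels": {"team": "payments"},
     "cpu_request_m": 2000, "mem_request_mi": 4096, "cpu_usage_m": 400},
    {"name": "search-1", "labels": {"team": "search"},
     "cpu_request_m": 500, "mem_request_mi": 1024, "cpu_usage_m": 450},
    {"name": "debug-pod", "labels": {},
     "cpu_request_m": 1000, "mem_request_mi": 512, "cpu_usage_m": 50},
]
print(cost_by_label(pods, "team", hours=10))
print(underutilized(pods))  # ['checkout-1', 'debug-pod']
```

The "(unlabeled)" bucket is often the most useful output: it shows how much spend can't yet be attributed to anyone.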

To learn more about how Kubernetes can generate cost savings through automation and monitoring while providing high levels of security and stability, contact a Logicworks expert.

About Logicworks

Logicworks helps customers migrate, run, and operate mission-critical workloads on AWS and Azure with security, scalability, and efficiency baked in. Our Cloud Reliability Platform combines world-class engineering talent, policy-as-code, and integrated tooling to enable customers to confidently meet compliance and security requirements while controlling costs and maintaining high availability. Together with our team of dedicated certified engineers and decades of IT management experience, we ensure our customers’ success across every stage of the Cloud Adoption Framework.
